This article describes how to integrate Kafka into a Spring Boot project to send and receive messages.

pom dependencies

We will skip the Spring Boot dependencies themselves. For Kafka, the only dependency needed is the spring-kafka integration package.

<dependency>
    <groupId>org.springframework.kafka</groupId>
    <artifactId>spring-kafka</artifactId>
</dependency>

Kafka-related YAML configuration

spring:
  kafka:
    bootstrap-servers: 30.46.35.29:9092
    producer:
      retries: 3
      acks: -1
      batch-size: 16384
      buffer-memory: 33554432
      key-serializer: org.apache.kafka.common.serialization.StringSerializer
      value-serializer: org.apache.kafka.common.serialization.StringSerializer
      compression-type: lz4
      properties:
        linger.ms: 1
        'interceptor.classes': com.tencent.qidian.ma.commontools.trace.kafka.TracingProducerInterceptor
    consumer:
      heartbeat-interval: 3000
      max-poll-records: 100
      enable-auto-commit: false
      key-deserializer: org.apache.kafka.common.serialization.StringDeserializer
      value-deserializer: org.apache.kafka.common.serialization.StringDeserializer
      properties:
        session.timeout.ms: 30000
    listener:
      concurrency: 3
      type: batch
      ack-mode: manual_immediate
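To make the producer sizes in this configuration concrete, the sketch below converts batch-size and buffer-memory into readable units and estimates roughly how many full batches the buffer could hold. The class and method names are hypothetical and only do arithmetic; the two numbers are the ones from the YAML.

```java
// Hypothetical helper, not part of Spring or Kafka: it only does arithmetic
// on the batch-size and buffer-memory values from the YAML configuration.
class ProducerSizing {
    static final long BATCH_SIZE_BYTES = 16_384L;        // spring.kafka.producer.batch-size
    static final long BUFFER_MEMORY_BYTES = 33_554_432L; // spring.kafka.producer.buffer-memory

    static long toKiB(long bytes) {
        return bytes / 1024;
    }

    static long toMiB(long bytes) {
        return bytes / (1024 * 1024);
    }

    // Rough upper bound on the number of full batches the buffer can hold.
    static long fullBatchesInBuffer(long bufferBytes, long batchBytes) {
        return bufferBytes / batchBytes;
    }

    public static void main(String[] args) {
        System.out.println("batch-size    = " + toKiB(BATCH_SIZE_BYTES) + " KiB");
        System.out.println("buffer-memory = " + toMiB(BUFFER_MEMORY_BYTES) + " MiB");
        System.out.println("full batches  = "
                + fullBatchesInBuffer(BUFFER_MEMORY_BYTES, BATCH_SIZE_BYTES));
    }
}
```

With these values a batch is 16 KiB and the buffer is 32 MiB, so the buffer has room for about 2048 full batches before the producer has to wait for space.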

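Producer configuration

1) Use @Configuration and @EnableKafka to declare the configuration class. (@EnableKafka turns on detection of @KafkaListener methods; KafkaTemplate itself only needs the bean definition.)

2) Create the bean with @Bean.

A typical configuration for reference: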

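package com.somnus.config.kafka;

import jakarta.annotation.Resource;
import java.util.HashMap;
import java.util.Map;
import org.apache.kafka.clients.producer.ProducerConfig;
import org.springframework.boot.autoconfigure.kafka.KafkaProperties;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.kafka.annotation.EnableKafka;
import org.springframework.kafka.core.DefaultKafkaProducerFactory;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.kafka.core.ProducerFactory;

@Configuration
@EnableKafka
public class KafkaProducerConfig {

    @Resource
    private KafkaProperties kafkaProperties;

    // Builds the producer settings from spring.kafka.producer.* in the YAML.
    // Assumes every value read here is set in the YAML.
    public Map<String, Object> producerConfigs() {
        KafkaProperties.Producer producer = kafkaProperties.getProducer();
        Map<String, Object> props = new HashMap<>();
        props.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, kafkaProperties.getBootstrapServers());
        props.put(ProducerConfig.RETRIES_CONFIG, producer.getRetries());
        // acks and compression-type must be copied explicitly, otherwise the YAML values are ignored.
        props.put(ProducerConfig.ACKS_CONFIG, producer.getAcks());
        props.put(ProducerConfig.COMPRESSION_TYPE_CONFIG, producer.getCompressionType());
        // batch-size and buffer-memory are bound as DataSize, so convert them to bytes.
        props.put(ProducerConfig.BATCH_SIZE_CONFIG, (int) producer.getBatchSize().toBytes());
        props.put(ProducerConfig.BUFFER_MEMORY_CONFIG, producer.getBufferMemory().toBytes());
        props.put(ProducerConfig.LINGER_MS_CONFIG, producer.getProperties().get("linger.ms"));
        props.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, producer.getKeySerializer());
        props.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, producer.getValueSerializer());
        return props;
    }

    public ProducerFactory<String, String> producerFactory() {
        return new DefaultKafkaProducerFactory<>(producerConfigs());
    }

    @Bean
    public KafkaTemplate<String, String> kafkaTemplate() {
        return new KafkaTemplate<>(producerFactory());
    }
}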

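Consumer configuration

1) Use @Configuration and @EnableKafka to declare the configuration class and enable @KafkaListener support.

2) Create the beans with @Bean.

A typical configuration for reference: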

package com.tencent.qidian.ma.maaction.web.config.kafka;

import jakarta.annotation.Resource;
import java.util.HashMap;
import java.util.Map;
import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.springframework.boot.autoconfigure.kafka.KafkaProperties;
import org.springframework.boot.autoconfigure.kafka.KafkaProperties.Listener.Type;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.kafka.annotation.EnableKafka;
import org.springframework.kafka.config.ConcurrentKafkaListenerContainerFactory;
import org.springframework.kafka.config.KafkaListenerContainerFactory;
import org.springframework.kafka.core.ConsumerFactory;
import org.springframework.kafka.core.DefaultKafkaConsumerFactory;
import org.springframework.kafka.listener.ConcurrentMessageListenerContainer;

/**
 * KafkaBeanConfiguration
 */
@Configuration
@EnableKafka
public class KafkaBeanConfiguration {

    @Resource
    private KafkaProperties kafkaProperties;

    @Bean(name = "kafkaListenerContainerFactory")
    public KafkaListenerContainerFactory<ConcurrentMessageListenerContainer<String, String>>
            kafkaListenerContainerFactory() {
        ConcurrentKafkaListenerContainerFactory<String, String> factory =
                new ConcurrentKafkaListenerContainerFactory<>();
        factory.setConsumerFactory(consumerFactory());
        factory.getContainerProperties().setAckMode(kafkaProperties.getListener().getAckMode());
        factory.setConcurrency(kafkaProperties.getListener().getConcurrency());
        if (kafkaProperties.getListener().getType() == Type.BATCH) {
            factory.setBatchListener(true);
        }
        return factory;
    }

    // This bean is used in the consumer demo later on; see the containerFactory attribute there.
    @Bean(name = "tenThreadsKafkaListenerContainerFactory1")
    public KafkaListenerContainerFactory<ConcurrentMessageListenerContainer<String, String>>
            tenThreadsKafkaListenerContainerFactory1() {
        ConcurrentKafkaListenerContainerFactory<String, String> factory =
                new ConcurrentKafkaListenerContainerFactory<>();
        // Both factories use the locally built consumer factory so they share the same settings.
        factory.setConsumerFactory(consumerFactory());
        factory.getContainerProperties().setAckMode(kafkaProperties.getListener().getAckMode());
        factory.setConcurrency(10);
        if (kafkaProperties.getListener().getType() == Type.BATCH) {
            factory.setBatchListener(true);
        }
        return factory;
    }

    public ConsumerFactory<String, String> consumerFactory() {
        return new DefaultKafkaConsumerFactory<>(consumerConfigs());
    }

    // Builds the consumer settings from spring.kafka.consumer.* in the YAML.
    public Map<String, Object> consumerConfigs() {
        KafkaProperties.Consumer consumer = kafkaProperties.getConsumer();
        Map<String, Object> propsMap = new HashMap<>();
        propsMap.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, kafkaProperties.getBootstrapServers());
        propsMap.put(ConsumerConfig.MAX_POLL_RECORDS_CONFIG, consumer.getMaxPollRecords());
        propsMap.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, consumer.getEnableAutoCommit());
        propsMap.put(ConsumerConfig.SESSION_TIMEOUT_MS_CONFIG, consumer.getProperties().get("session.timeout.ms"));
        propsMap.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, consumer.getKeyDeserializer());
        propsMap.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, consumer.getValueDeserializer());
        return propsMap;
    }
}

Sending Kafka messages with KafkaTemplate in Spring Boot

@Resource
private ObjectMapper mapper;

@Resource
private KafkaTemplate<String, String> kafkaTemplate;

public void sendOrder() {
    String topic = "order";
    try {
        Order order = new Order();
        String message = mapper.writeValueAsString(order);
        CompletableFuture<SendResult<String, String>> future = kafkaTemplate.send(topic, message);
        future.thenAccept(result -> {
            // Runs only when the send succeeded.
            if (result.getRecordMetadata() != null) {
                log.debug("send message:{} with offset:{}", message, result.getRecordMetadata().offset());
            }
        }).exceptionally(exception -> {
            // Runs only when the send failed asynchronously.
            log.error("KafkaProducer send message failure,topic={},data={}", topic, message, exception);
            return null;
        });
    } catch (Exception e) {
        // Serialization errors and synchronous send failures end up here.
        log.error("KafkaProducer send message exception,topic={}", topic, e);
    }
}

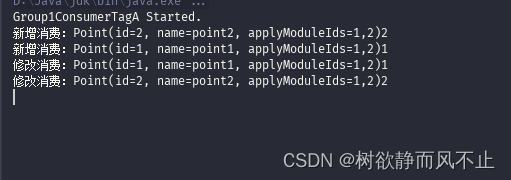
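The success and failure callbacks on the send result can be tried without a broker. The following is a minimal sketch that uses a plain java.util.concurrent.CompletableFuture<Long> as a stand-in for the future returned by kafkaTemplate.send(...), with the Long playing the role of the record offset. SendCallbackDemo and its methods are hypothetical names for illustration. One detail it makes visible: in a thenAccept(...).exceptionally(...) chain, the failure reaches exceptionally wrapped in a CompletionException, so the sketch unwraps it before reading the message.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CompletionException;
import java.util.concurrent.atomic.AtomicReference;

// Hypothetical demo class: a CompletableFuture<Long> stands in for the
// future returned by KafkaTemplate.send(...); no Kafka classes are used.
class SendCallbackDemo {

    // Unwraps the CompletionException added by the dependent stage, if present.
    static Throwable unwrap(Throwable ex) {
        return (ex instanceof CompletionException && ex.getCause() != null) ? ex.getCause() : ex;
    }

    // Attaches a success and a failure callback and reports which one has run.
    static String describe(CompletableFuture<Long> sendFuture) {
        AtomicReference<String> outcome = new AtomicReference<>("pending");
        sendFuture
                .thenAccept(offset -> outcome.set("sent at offset " + offset))
                .exceptionally(ex -> {
                    outcome.set("failed: " + unwrap(ex).getMessage());
                    return null;
                });
        return outcome.get();
    }

    public static void main(String[] args) {
        CompletableFuture<Long> failed = new CompletableFuture<>();
        failed.completeExceptionally(new IllegalStateException("broker unavailable"));

        System.out.println(describe(CompletableFuture.completedFuture(42L)));
        System.out.println(describe(failed));
        System.out.println(describe(new CompletableFuture<>()));
    }
}
```

Because the first two futures are already completed, their callbacks run immediately on the calling thread; the third is never completed, so neither callback fires.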

Consuming Kafka messages with @KafkaListener in Spring Boot

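max.poll.interval.ms

The default is 5 minutes.

If the gap between two poll() calls exceeds this value, the consumer is considered too slow to keep up: it is removed from the consumer group, its partitions are reassigned to other consumers, and a rebalance is triggered.

If your consumer nodes tend to stop consuming shortly after a restart, consider checking this setting or speeding up message processing in the consumer.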

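max.poll.records

max-poll-records is a Kafka consumer setting that caps the number of records a consumer receives from a single poll; the default is 500. A consumer group can contain multiple consumer instances, each instance consumes one or more partitions, and each poll returns one or more records.

Capping the number of records per poll throttles consumption and bounds the memory needed to hold a batch.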
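The two settings interact: if a listener handles a whole batch before the next poll, the worst-case gap between polls is roughly max.poll.records times the per-record processing time. The sketch below works through that arithmetic. PollBudget and its methods are hypothetical names, and the per-record times are made-up inputs, not measurements.

```java
// Hypothetical helper: estimates whether a full batch can be processed
// within max.poll.interval.ms. Pure arithmetic, no Kafka classes involved.
class PollBudget {

    // Worst-case time spent on one full batch, in milliseconds.
    static long worstCaseBatchMillis(int maxPollRecords, long perRecordMillis) {
        return (long) maxPollRecords * perRecordMillis;
    }

    // True if a full batch finishes within the allowed poll interval.
    static boolean fitsInInterval(int maxPollRecords, long perRecordMillis, long maxPollIntervalMillis) {
        return worstCaseBatchMillis(maxPollRecords, perRecordMillis) <= maxPollIntervalMillis;
    }

    // Largest max.poll.records value that still fits within the interval.
    static long largestSafeBatch(long perRecordMillis, long maxPollIntervalMillis) {
        return maxPollIntervalMillis / perRecordMillis;
    }

    public static void main(String[] args) {
        long fiveMinutes = 300_000L; // default max.poll.interval.ms
        System.out.println(worstCaseBatchMillis(100, 50));           // 100 records at 50 ms each
        System.out.println(fitsInInterval(100, 50, fiveMinutes));
        System.out.println(fitsInInterval(500, 1_000, fiveMinutes)); // 500 records at 1 s each
        System.out.println(largestSafeBatch(1_000, fiveMinutes));
    }
}
```

Under these assumptions, 100 records at 50 ms each take 5 seconds and fit comfortably, while 500 records at 1 second each take 500 seconds and exceed the 5-minute default; at 1 second per record, at most 300 records per poll stay within the limit.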

Reference: max-poll-records and listener.concurrency configuration in Kafka

@Resource
private ObjectMapper mapper;

@KafkaListener(
        id = "order_consumer",
        topics = "order",
        groupId = "g_order_consumer_group",
        // Optional: set containerFactory to use a specific container factory bean.
        // If omitted, the bean named kafkaListenerContainerFactory is used.
        //containerFactory = "tenThreadsKafkaListenerContainerFactory1",
        properties = {"max.poll.interval.ms:300000", "max.poll.records:1"}
)
// The method may declare only the ConsumerRecords<String, String> records parameter; the ack parameter is optional.
// With a manual ack mode, offsets are committed only when ack.acknowledge() is called,
// so acknowledging after the batch has been processed guards against message loss.
public void consume(ConsumerRecords<String, String> records, Acknowledgment ack) {
    for (ConsumerRecord<String, String> record : records) {
        String msg = record.value();
        log.info("Consume msg:{}", msg);
        try {
            Order order = mapper.readValue(msg, Order.class);
            // Handle the business logic
        } catch (Exception e) {
            log.error("Consume failed, msg:{}", msg, e);
        }
    }
    ack.acknowledge();
}
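To illustrate why the offset is acknowledged only after the batch has been processed, here is a small in-memory simulation. It does not use Kafka at all: the list stands in for a partition, committedOffset stands in for the group's committed offset, and every name is hypothetical. Each poll reads from the committed offset, which models a consumer that restarts after a crash.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical in-memory model of manual acknowledgment; not Kafka code.
class ManualAckSimulation {
    private final List<String> partition; // stand-in for a topic partition
    private int committedOffset = 0;      // where a restarted consumer resumes

    ManualAckSimulation(List<String> partition) {
        this.partition = partition;
    }

    // Returns up to maxPollRecords records starting at the committed offset.
    List<String> poll(int maxPollRecords) {
        int end = Math.min(partition.size(), committedOffset + maxPollRecords);
        return new ArrayList<>(partition.subList(committedOffset, end));
    }

    // Plays the role of ack.acknowledge() for a whole batch.
    void acknowledge(int batchSize) {
        committedOffset += batchSize;
    }

    int committedOffset() {
        return committedOffset;
    }

    public static void main(String[] args) {
        ManualAckSimulation sim = new ManualAckSimulation(Arrays.asList("a", "b", "c"));

        List<String> first = sim.poll(2);
        // Simulated crash here: the batch was never acknowledged.
        List<String> redelivered = sim.poll(2);
        System.out.println(first.equals(redelivered)); // same records are delivered again

        sim.acknowledge(redelivered.size());
        System.out.println(sim.poll(2)); // only the remaining record is left
    }
}
```

The trade-off is the usual at-least-once one: a batch that was processed but not yet acknowledged is delivered again, so the business logic should tolerate duplicates.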